Archive page

Blog archive

Page 2 of 55

Posts

- Kiwi-founded shoe company Allbirds pivots to AI | RNZ News

A local tech commentator says Kiwi-founded Allbirds' surprise pivot from making merino shoes to AI chips is not as crazy as it sounds. —…

- Silo — Season 3 Official Teaser | Apple TV - YouTube

https://www.youtube.com/watch?v=C9-_VVX9BvE The truth will surface. Silo returns July 3 on Apple TV — Silo — Season 3 Official Teaser |…

- Community Letter from Tim - Apple

This is not goodbye. But at this moment of transition, I wanted to take the opportunity to say thank you. Not on behalf of the company,…

- Building Locabulary.fun with Replit: Big Promises, Real Learning

I started Locabulary.fun with two simple goals: to recreate a verbal geography game I’d been playing with my kids at the dinner table, and…

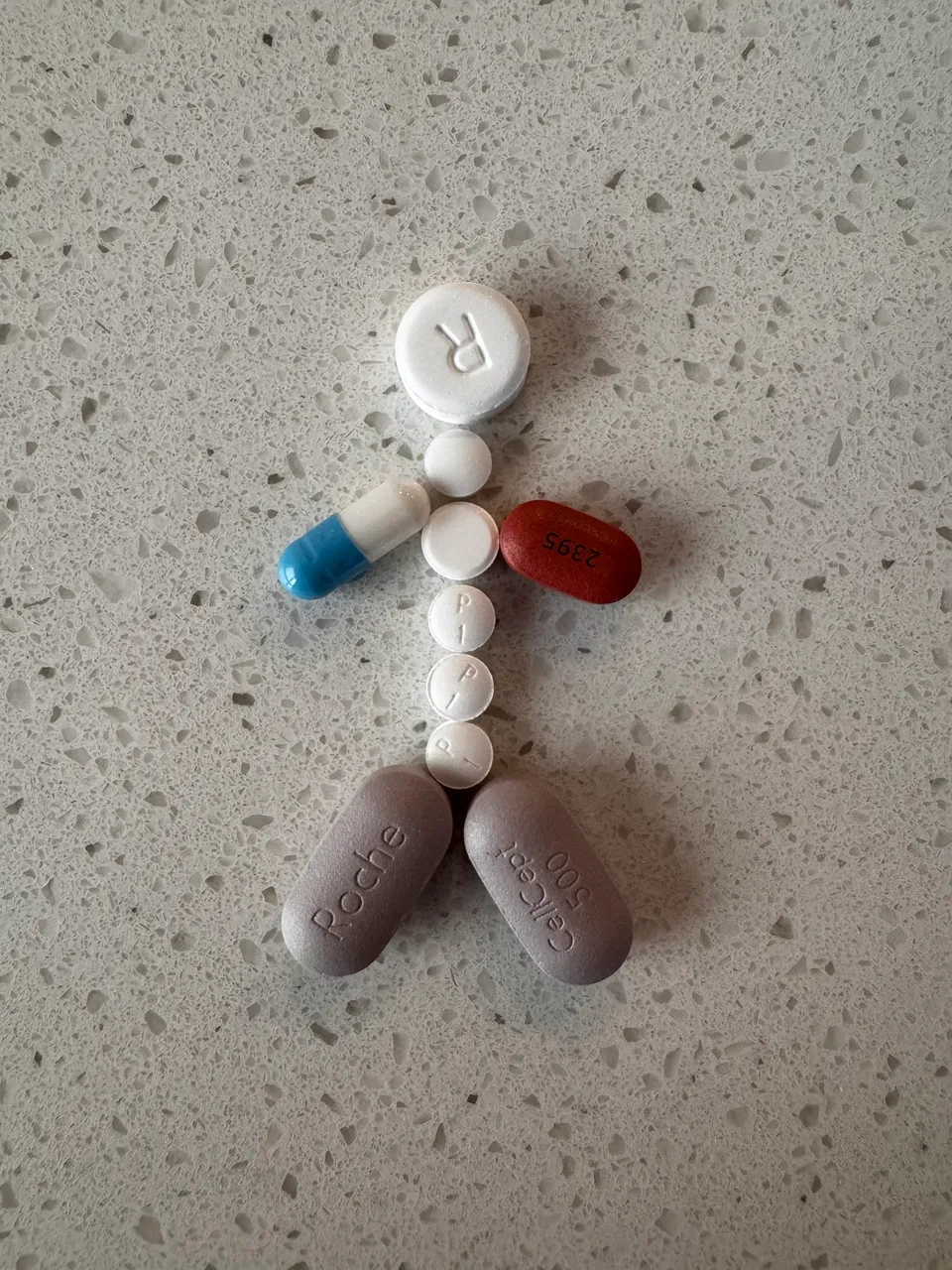

- One Year Later: Building From the Inside Out

A year ago, everything was loud. Cardiac sarcoidosis. An ICD implant. Meds that keep me alive while simultaneously wrecking my sleep, my…

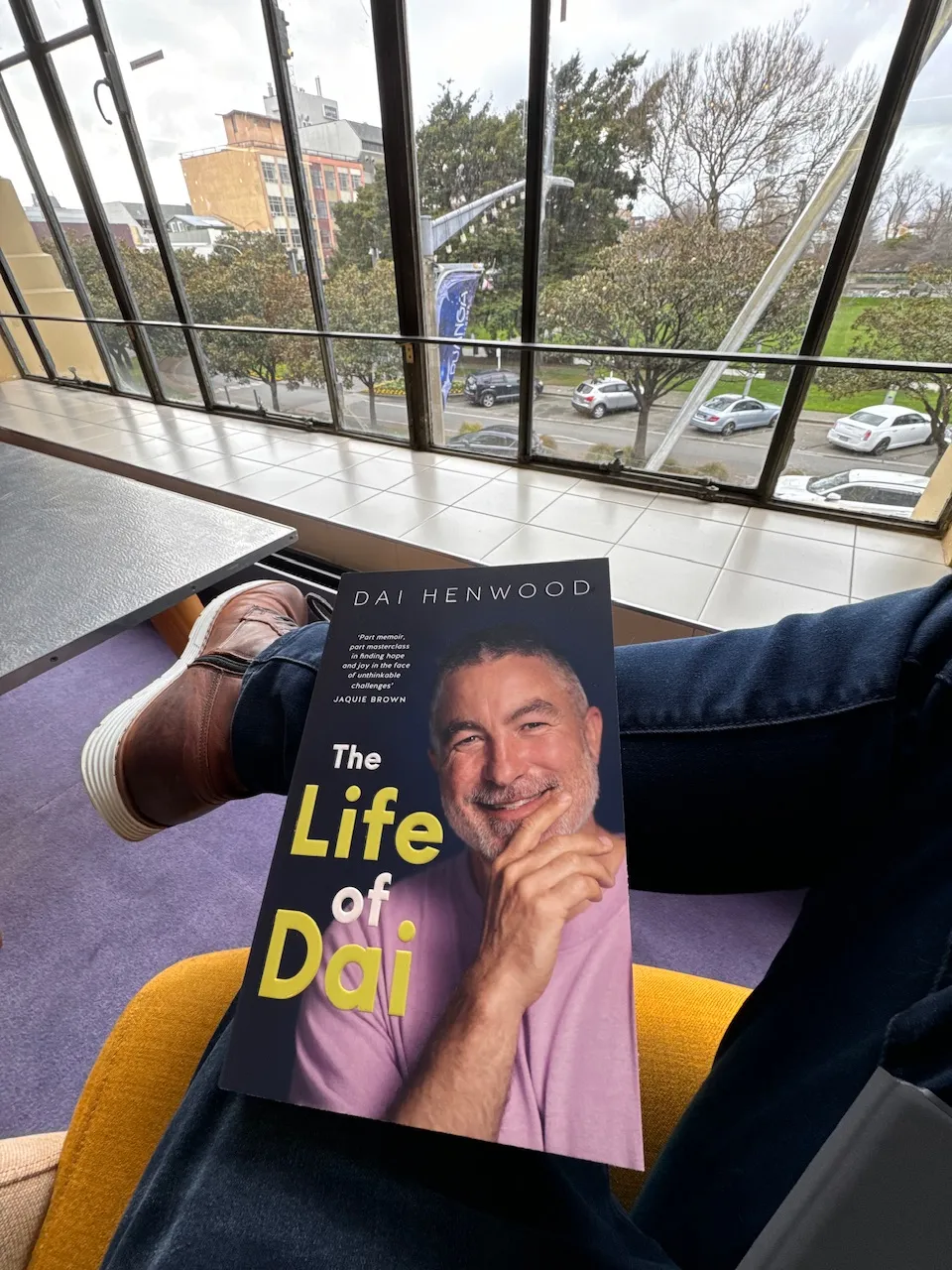

- The Hard Part: Navigating Emotional Turmoil

I nearly had a massive ugly cry in the local public library. I'd just started reading "The Life of Dai," which beautifully captures the…

- A Surreal Journey: From Head Rushes to Heart Surgery

So, this post is a bit different from the norm, but I think it will do me good to write it, so here we are. April 18th, 2024, was a bit of…

- Ternary! ☘️

If you find yourself with a conditional in the form of an if/else, there's a more concise way of writing it. Say hello to a ternary. Take…
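A minimal sketch of the idea, using a hypothetical even/odd check (not the example from the full post). The two functions, `parityIfElse` and `parityTernary`, are illustrative names. Both return the same label; the ternary just collapses the two branches into `condition ? valueIfTrue : valueIfFalse`.

```typescript
// Hypothetical example: label a number as "even" or "odd".

// if/else version: each branch assigns to a variable declared up front.
function parityIfElse(n: number): string {
  let label: string;
  if (n % 2 === 0) {
    label = "even";
  } else {
    label = "odd";
  }
  return label;
}

// Ternary version: condition ? valueIfTrue : valueIfFalse
function parityTernary(n: number): string {
  return n % 2 === 0 ? "even" : "odd";
}

console.log(parityIfElse(4), parityTernary(4)); // even even
console.log(parityIfElse(7), parityTernary(7)); // odd odd
```

Ternaries read best when each branch is a single value; once the branches need multiple statements or nested conditions, the plain if/else is usually clearer.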

- Dough game

Working on my gluten-free pizza dough game. After 5 hours of prep (probably 20 mins of effort) I'm fairly happy with the results. I'm…

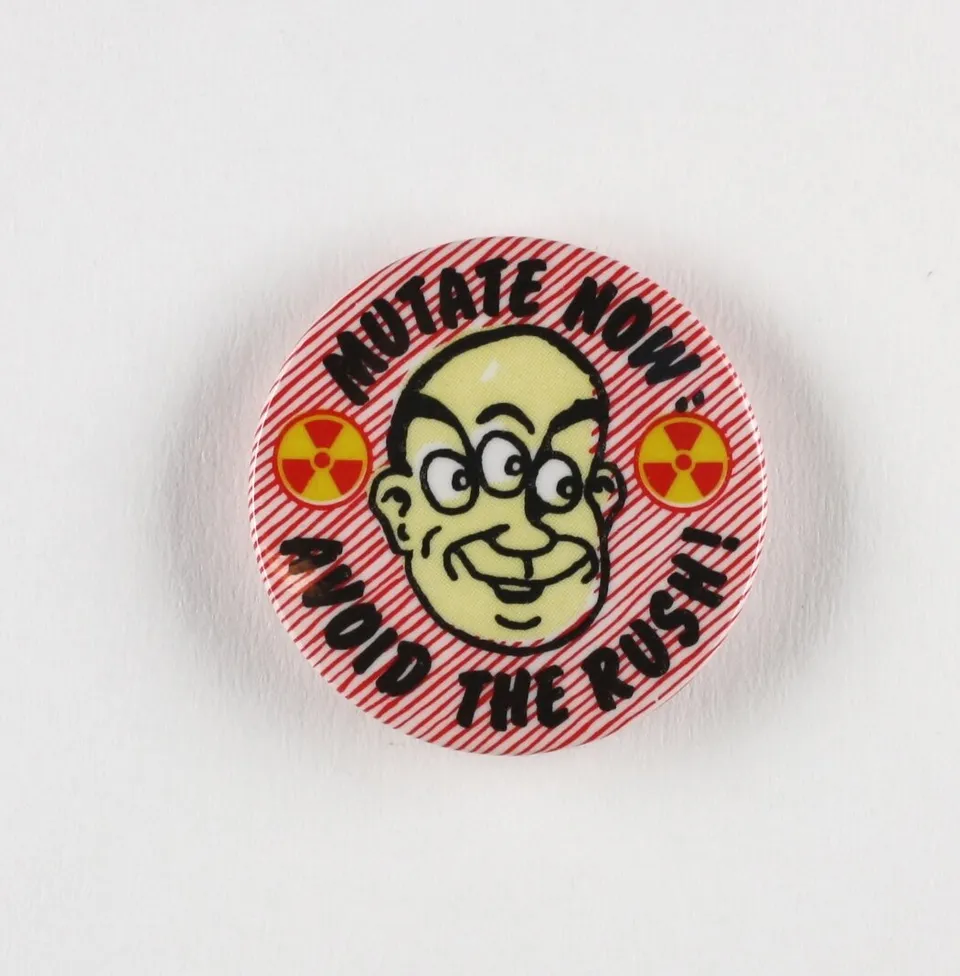

- Mutation === 💩

One of the many things I've picked up from the excellent Syntax podcast over the years is that mutation should be avoided. Today I came across a…
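A minimal sketch of what avoiding mutation can look like, assuming a hypothetical list of scores (this is not the case the full post describes). `Array.prototype.sort` reorders an array in place, so the non-mutating version copies the array with spread syntax first. `sortMutating` and `sortCopy` are illustrative names.

```typescript
// Hypothetical example: sorting a list of scores.

// Mutating: sort() reorders the original array in place
// and returns that same array.
function sortMutating(values: number[]): number[] {
  return values.sort((a, b) => a - b);
}

// Non-mutating: copy with spread first, then sort the copy.
// `readonly` lets the compiler reject in-place changes to `values`.
function sortCopy(values: readonly number[]): number[] {
  return [...values].sort((a, b) => a - b);
}

const scores: number[] = [42, 7, 19];
const sorted = sortCopy(scores);
console.log(sorted.join()); // 7,19,42
console.log(scores.join()); // 42,7,19 (original untouched)

const other: number[] = [3, 1, 2];
const result = sortMutating(other);
console.log(result === other, other.join()); // true 1,2,3 (original changed)

// Adding an item without mutation: build a new array instead of push().
const withNew = [...scores, 100];
console.log(withNew.length, scores.length); // 4 3
```

Newer runtimes (ES2023) also provide `toSorted()`, which returns a sorted copy without the manual spread.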