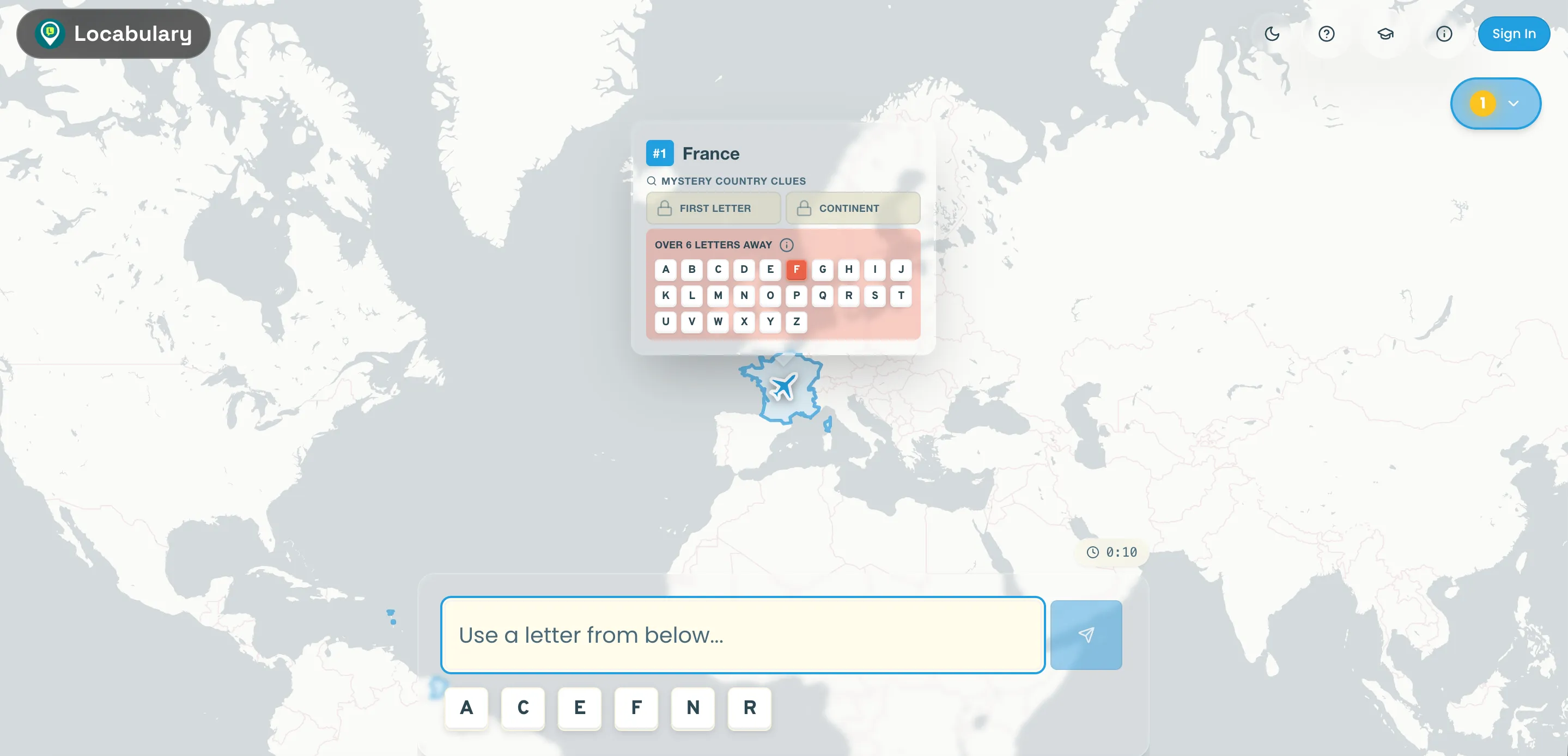

I started Locabulary.fun with two simple goals: to recreate a verbal geography game I’d been playing with my kids at the dinner table, and to grow my skills in AI-driven development.

That second goal is exactly why I chose Replit to get started. I wanted to open a browser tab, begin building immediately, and avoid the usual local setup rabbit hole. No “just one more config tweak.” No yak shaving. Just idea to prototype. Quickly.

And to be fair, that part worked very well.

The speed was real

The first version came together embarrassingly fast. I could build features, try them immediately, and keep momentum going. That loop (think, build, run, adjust) felt tight in a way that traditional workflows sometimes don’t, especially when a project is still undefined.

For early product discovery, that speed matters more than perfection. A rough thing you can interact with beats a beautiful architecture diagram every day of the week.

It also changed my behaviour. I found myself experimenting more and hesitating less. Instead of trying to predict every edge case up front, I’d test an idea and see what happened. Some ideas failed quickly. Good. Better that than carrying bad assumptions for weeks.

Then the messy bits showed up

Once Locabulary started to feel “real” (rather than “just a prototype”), the harder problems arrived right on schedule.

The challenge wasn’t getting code to run. It was confidence.

Could I trust what was generated? Did this change subtly break something else? Was I improving the product, or just layering on accidental complexity one clever suggestion at a time?

This is where the glossy promise of AI-assisted software delivery starts to wobble. The pitch is seductive: describe what you want, get most of the implementation for free, move at speed. Sometimes that’s true. But speed without clarity becomes expensive very quickly, especially if you haven’t built software the “old-fashioned” way before.

I found myself spending more time verifying than creating. Reading diffs carefully. Tracing behaviour. Rewriting code that looked fine at first glance but revealed brittleness after a few iterations.

None of this is dramatic, but it is real work. Important work.

I also learned quickly how much the way I worked with the agents mattered. How I structured prompts, developed agent skills, constrained behaviour with hooks, and guided outputs all determined whether I could keep both speed and quality.
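As a rough illustration of what constraining behaviour with a hook can look like, here is a minimal Python sketch. It is not the setup used for Locabulary.fun: the directory names, the protected file list, and the function `find_violations` are all hypothetical, chosen only to show the idea of checking an agent's changed files against agreed boundaries before accepting them.

```python
# Hypothetical boundaries: directories an agent may edit without review.
ALLOWED_PREFIXES = ("src/", "tests/")

# Hypothetical protected files that always need a human decision.
PROTECTED_FILES = {"package.json", ".env"}


def find_violations(changed_files, allowed=ALLOWED_PREFIXES, protected=PROTECTED_FILES):
    """Return the changed paths that fall outside the agreed boundaries.

    A path is a violation if it is explicitly protected, or if it does not
    live under one of the allowed directory prefixes.
    """
    violations = []
    for path in changed_files:
        if path in protected or not path.startswith(tuple(allowed)):
            violations.append(path)
    return violations


# Example: two in-bounds edits and two that should be flagged.
flagged = find_violations(
    ["src/game.py", "tests/test_game.py", "package.json", "docs/notes.md"]
)
print(flagged)
```

A check like this could run from whatever hook mechanism your tooling offers (a pre-commit script, for example) and reject the change when the returned list is non-empty. It compares paths as plain strings, so a real version would also want to normalise them first.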

AI doesn’t remove engineering, it reshuffles it

The biggest lesson wasn’t “AI is amazing” or “AI is overhyped.” It was simpler:

AI shifts where the effort lives.

You write less boilerplate and spend less time manually recreating the same patterns over and over. That’s nice.

But you do more work designing constraints for your agents, reviewing outputs, maintaining architectural discipline, and making product decisions. You still need taste. You still need standards. You still need to know when to say, “That’s clever, but wrong for this app.”

In that sense, Replit/AI felt less like autopilot and more like having an enthusiastic team that never gets tired and occasionally hallucinates. Very useful, but still requiring direction.

For Locabulary.fun, the wins were clear: faster starts, more experimentation, fewer barriers to getting something live. The trade-off was needing to be stricter about boundaries, naming, and testing much earlier than expected.

Where I’ve landed (for now)

I still think tools like Replit are a big deal, especially for solo builders and small teams validating ideas. They reduce the “distance to first useful thing,” and that matters.

Now that I’m past the proof-of-concept stage, I’ve moved away from Replit and into a more traditional setup using Visual Studio Code and GitHub Copilot.

I’m still not writing code manually for this project, but I am using more capable tools for this stage. That shift feels appropriate.

Locabulary.fun is still evolving. Still imperfect. Still teaching me things. There are bugs. Some parts aren’t where I want them to be yet.

But the core point is simple: without this tooling, I likely wouldn’t have built this at all.

The promise of AI in software development is real, just not in the magical, one-click way it’s often presented.

For me, the real promise is this: enabling things to be built that otherwise wouldn’t exist. Getting further, faster, while still taking responsibility for what you put in front of people.

That part hasn’t changed. It probably shouldn’t.